and I will post there instead of here.

Sunday, November 16, 2014

Sunday, October 5, 2014

Berkson's paradox

Berkson's paradox is a counter-intuitive result in probability and statistics. Imagine that we have two independent events A and B. By definition of independence, the conditional probability of event A given B is the same as the probability of event A:

P(A | B) = P(A).

i.e., knowing that event B occurred gives us no information about the probability that A occurred.

Berkson's paradox is that, if we restrict ourselves to the cases where at least one of the events A or B occurs, knowledge that B has occurred makes it less likely that A has occurred:

P(A | B, A or B) < P(A | A or B) *.

The reason that this result is counter-intuitive is that A and B are independent events, but they become negatively dependent on each other when we restrict ourselves to the cases that A or B occurs. We will see that Berkson's paradox is a form of selection bias; in restricting ourselves to A or B, we ignore the cases where both A and B do not occur.
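To see where the inequality comes from, here is a short derivation. It is only a sketch; it uses nothing beyond the independence of A and B and the condition 0 < P(A), P(B) < 1 from the footnote, and it writes "A or B" as the union $A \cup B$.

```latex
\begin{align*}
P(A \mid B,\; A \cup B) &= P(A \mid B) = P(A)
  && \text{since } B \subseteq A \cup B \text{ and } A, B \text{ are independent,} \\
P(A \mid A \cup B) &= \frac{P(A)}{P(A \cup B)}
  && \text{since } A \subseteq A \cup B, \\
P(A \cup B) &= 1 - [1 - P(A)]\,[1 - P(B)] < 1
  && \text{since } P(A) < 1 \text{ and } P(B) < 1.
\end{align*}
```

Dividing P(A) > 0 by a number smaller than 1 makes it larger, so P(A | A or B) > P(A) = P(A | B, A or B).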

Berkson's paradox can be used to explain the stereotype that the most handsome men are jerks and that the nicest men are ugly, an explanation proposed by Jordan Ellenberg in his book How Not to Be Wrong.

Let's assume for the moment that looks and niceness are independent variables in the population of males so that men are randomly distributed on the looks-niceness plane:

So each guy is a point in this plane. Every girl wants to date a guy in the top right corner of this plot**: a man that is both handsome and nice. However, if a guy is a jerk sometimes, she might still date him if he is super good-looking. Also, if a guy is extremely nice, she might still date him even if he is lacking in the looks category. Thus, the guys that she is willing to date are probably where:

niceness + looks > some constant value,

the green points in the upper-right corner:

From this natural compromising behavior in her dating criterion, many of the best-looking guys that this girl dates are not so nice; many of the nicest guys she dates are not as good-looking. By restricting herself to this set of guys, she sees a negative correlation between looks and niceness, despite these two variables being independent in the population! This is Berkson's paradox, and now you can see that this induced correlation stems from selection bias.

We can go one step further: maybe the guys in the very top-right corner (red points) are so nice and handsome that they will not consider dating the girl we are considering, who is just decently nice and good-looking.

Now her dating pool is even more restricted due to selection bias, and the negative correlation between good looks and niceness is even more severe.
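The effect is easy to reproduce numerically. The sketch below is a toy simulation, not anything from Ellenberg's book: it assumes looks and niceness are independent standard normal scores, and the thresholds `lower` and `upper` (as well as the function names) are made-up values chosen only for illustration.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def simulate(n=20000, lower=1.0, upper=2.5, seed=0):
    """Return the looks-niceness correlation in three groups of men."""
    rng = random.Random(seed)
    # Assumption: looks and niceness are independent standard normal scores.
    men = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]
    # Men she is willing to date: niceness + looks > some constant value.
    willing = [(looks, nice) for looks, nice in men if looks + nice > lower]
    # Remove the very top-right corner: men who would not date her.
    pool = [(looks, nice) for looks, nice in willing if looks + nice < upper]

    def corr(points):
        return pearson([p[0] for p in points], [p[1] for p in points])

    return corr(men), corr(willing), corr(pool)


r_all, r_willing, r_pool = simulate()
print(f"whole population:       {r_all:+.2f}")
print(f"men she would date:     {r_willing:+.2f}")
print(f"men who would date her: {r_pool:+.2f}")
```

Under these assumptions the correlation in the whole population should come out close to zero, clearly negative among the men she is willing to date, and more negative still once the top-right corner is removed.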

The lesson here is that we can see spurious correlations between variables as a result of selection bias. We must think carefully about whether our experiences and data collection strategies sample the population in question well enough to draw sound conclusions from our observations.

The paradox is named after Joseph Berkson, who pointed out a selection bias in case-control studies that aim to identify causal risk factors for a disease [1]. If the control group is taken from within the hospital, a negative correlation could arise between the disease and the risk factor because of different hospitalization rates among the case and control samples.

Another example comes from the book Causality: Models, Reasoning, and Inference by Judea Pearl regarding university admissions criteria. The admissions office at a university may consider both GPA and SAT scores for admissions. Of course, the university wants students with both a high GPA and a high SAT score. However, a school may still admit a student with a poor GPA if he or she has a very high SAT score, and admit a student with a poor SAT score if he or she compensates with a high GPA. Even if GPA and SAT scores were independent variables, this selection bias could induce a negative correlation between GPA and SAT scores among the student body.

[1] Berkson, Joseph (June 1946). "Limitations of the Application of Fourfold Table Analysis to Hospital Data". Biometrics Bulletin 2 (3): 47–53

* We need 0 < P(A), P(B) < 1 for this. That is, the events have to be interesting enough that neither A nor B occurs with certainty and neither is impossible.

** Of course, niceness and handsomeness are [hopefully] not the only variables that a girl will consider in the guys that she dates. Please excuse this simplification.


Thursday, July 31, 2014

Frequentist vs. Bayesian Statistics

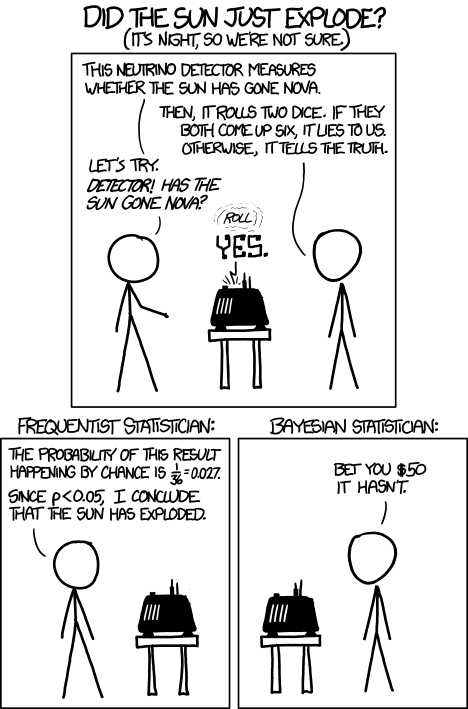

Two approaches to problems in the world of statistics and machine learning are frequentist and Bayesian statistics. This comic from XKCD illustrates a difference between the two viewpoints.

We have a neutrino detector that measures whether the sun has gone nova. The detector is not perfect; with probability 1/36, it incorrectly indicates whether or not the sun has gone nova. [The probability of rolling two fair dice and getting a 6 on both is 1/6 * 1/6 = 1/36.]

Next, we push the button on the detector to try it, and it indicates that the sun has gone nova. Has the sun indeed exploded? [The sun is shining on the other side of the earth, and there is a time lag for the light from the sun to reach us.]

The frequentist might make the following point. The probability that the detector would lie to us is 1/36. That's pretty small...

The Bayesian statistician cannot argue with this point. However, and this is the distinction between the two mindsets, the Bayesian statistician would take his or her prior knowledge into account that the probability of the sun exploding today is very small, so most likely the detector is lying, in spite of the fact that the detector is unlikely to lie.

Let's put this into some formal mathematics. Define two events:

E: the sun has exploded

D: the neutrino detector has detected an explosion.

We know that the probability that the detector has detected an explosion given that there was not actually an explosion is P(D | ~E) = 1/36. The quantity that we're interested in for making this bet is the probability that there was actually an explosion given the data that the detector has detected an explosion, P(E | D). A fancy name for this probability is the posterior probability.

To get the posterior P(E | D), we need to use Bayes' theorem:

P(E | D) = P(D | E) P(E) / P(D).

The term P(D | E) is called the likelihood; this is the probability of observing the data given the event. Frequentists focus on the likelihood when determining whether or not an event has occurred. In this case,

P(D | E) = 1 - P(~D | E) = 1 - 1/36 = 35/36.

The likelihood is quite high, so the frequentist might conclude that the sun indeed exploded.

However, of course, Bayes' theorem tells us that there is another contribution to the story: the term P(E), which is called the prior. This is simply the probability that the sun has exploded. We know that this is indeed very small, and the Bayesian statistician would inject this "prior knowledge" into the problem, hence taking the prior probability P(E) into account in determining P(E | D).

The probability of the detector detecting an explosion P(D) is somewhat redundant since we can write it in terms of other quantities:

P(D) = P(D | E) P(E) + P(D | ~E) [1 - P(E)]

... = 34/36 P(E) + 1/36.

Note that this term goes to 1/36 as P(E) gets small and is 35/36 if P(E) = 1 (i.e., if the sun exploded with certainty).

Thus, we should write the probability that the sun actually exploded, given our evidence that the neutrino detector went off,

P(E | D) = 35/36 P(E) / ( 34/36 P(E) + 1/36).

At small prior probabilities, this looks like

P(E | D) ~ 35 P(E).

The Bayesian statistician knows that the astronomically small prior P(E) overwhelms the high likelihood P(D | E).
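To put numbers on this, here is a small sketch that evaluates the posterior formula above with exact fractions. The function name `posterior` and the three example priors are assumptions picked for illustration; the post does not say what the actual prior for the sun exploding should be.

```python
from fractions import Fraction

P_D_GIVEN_E = Fraction(35, 36)      # detector tells the truth about an explosion
P_D_GIVEN_NOT_E = Fraction(1, 36)   # detector lies (the dice came up double six)


def posterior(prior):
    """P(E | D) from Bayes' theorem, for a given prior P(E)."""
    # Law of total probability: P(D) = P(D|E) P(E) + P(D|~E) [1 - P(E)]
    evidence = P_D_GIVEN_E * prior + P_D_GIVEN_NOT_E * (1 - prior)
    return P_D_GIVEN_E * prior / evidence


# Hypothetical priors, from "coin flip" down to "very unlikely".
for prior in (Fraction(1, 2), Fraction(1, 1000), Fraction(1, 10**9)):
    print(f"P(E) = {float(prior):.1e}  ->  P(E | D) = {float(posterior(prior)):.3e}")
```

Only when the prior is comparable to the detector's error rate or larger does the posterior become large; for small priors the posterior stays close to 35 P(E), as in the approximation above.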

In this problem, we clearly have a reason to inject our belief/prior knowledge that P(E) is very small, so it is very easy to agree with the Bayesian statistician. However, in many cases, it is difficult or unjustified to assume prior knowledge P(E).

This bet is quite moot anyway. Consider the "loss function": if the Bayesian statistician is correct, you lose $50. If the Bayesian statistician is incorrect, the world will end and you probably won't have a chance to spend the $50.


Sunday, July 20, 2014

Solution to the card-deck challenge

Hello again for part 2 of my guest appearance.

You guys are fantastic! Cory and I were very impressed by your suggestions and creativity at solving the card-deck challenge:

Five random cards are drawn from a deck of 52 cards (four suits and 13 cards per suit). You see all five cards and can decide which four of those cards you want to reveal to your partner, and in which order. Is there an ordering scheme that you and your partner can agree on beforehand that allows your partner to determine the fifth (hidden) card from the choice and order of the four cards that you reveal?

The three correct solutions that we received were from Douglas, James, and Vince. Congratulations to all three of you!

If you want to solve it yourself, this is your last chance. I will reveal the solution below this cute lolcat:

Okay, here we go. Surprisingly, such a scheme can be found, and it's actually easy enough to memorize so that you can perform this at your next dinner party. Below I explain one possible scheme, and there are certainly many other conventions that would work just as well.

As a reminder, all we have to choose is which card we hide and want our partner to guess, let's call this card the target, and in what order we want to reveal the other four cards.

We need to signal the suit and the value of the target card. The former is easy: Since we are given five cards of a deck of four suits, we can employ one of my favourite "you don't say" math theorems, the Pigeonhole principle, to realize that there will always be a pair of cards with the same suit. Hence:

Rule 1: The first card that you reveal is the same suit as the target (the card that will be hidden).

So far so good, by revealing one card we reduce the number of possible targets from 48 (52 - 4 revealed cards) to 12 (13 - 1 revealed card of the same suit).

Signaling the rank is harder, because in the worst case we have no influence over which three cards Rule 1 leaves us with. So we have to assume these three cards are entirely random. All right, how many ways do we have to order three random cards? That's 6, because there are three choices for which card to show first, each of those gives us two choices of which card to show second, and the last card is determined by that: 3*2 = 6. This means that we can signal any number 1, 2, 3, 4, 5, or 6. A simple scheme for signaling each number is given below; all we need to know at this point is that six different patterns is all the three middle cards can give us.

Rule 2: Use the three middle cards to signal the distance in rank between the first card and the target.

That's almost the entire trick, but one more idea is required to reduce the number of possible targets from 12 to 6. To begin with, we realize that Rule 1 only says that one of the two cards of the same suit needs to be revealed first; it does not state which one. For example, we could agree to always show the lower of the two cards, and then use the three middle cards to signal how many ranks higher the target is. This works only in some of the cases: whenever the higher card is at most six ranks higher than the lower card. For example, given five cards of which ♣8 and ♣K are the two clubs,

we would choose the target to be ♣K, the first card to be ♣8, and the three remaining cards to signal a five, since the King is five ranks higher than the Eight.

And what do we do when the two cards of the same suit are more than six apart? The beautiful answer is: They are not! At least not modulo 13:

It turns out that the distance between any two of the 13 cards of the same suit is at most 6 (=floor(13/2)), if you continue counting from the beginning once you hit the Ace. For example, the distance between Q and 3 is four, the distance between J and 4 is six.

Rule 3: Choose which of the two possible first cards to show (Rule 1) such that the target's rank is at most 6 (modulo 13) ranks above the first card.

And that's all there is to it. We are now able to choose four cards in such a way that our partner will be able to calculate the remaining fifth card. For example:

Given ♣2, ♣A, ♠Q, ♡3, ♡Q, we can either play for clubs and show ♣A first and signal one, or play for hearts, show ♡Q first and signal four.

As a final step, here is a scheme for using the three middle cards to signal the number 1, 2, 3, 4, 5 or 6: Start by choosing an order for all 52 cards. For example: The higher the rank, the higher the card, and if the rank is the same, then use the suit order ♣ > ♠ > ♡ > ♢. Once we agree on the order of the three cards, low, mid, high, it is easy to translate that into the numbers from 1 to 6:

low, mid, high -> 1

low, high, mid -> 2

mid, low, high -> 3

mid, high, low -> 4

high, low, mid -> 5

high, mid, low -> 6

Concluding examples

1) Given these five cards, ♣8, ♣K, ♠Q, ♡6, ♢Q, which cards should we reveal and in which order?

According to Rule 1, the first and target card will be a club. The ♣K is 5 ranks higher than the ♣8, while the ♣8 is 8 ranks higher than the ♣K. Hence, according to Rule 3, we first reveal ♣8 and let ♣K be the target. With the remaining cards ♠Q, ♡6, ♢Q we have to signal 5, and hence reveal the highest card second, ♠Q, then the lowest, ♡6, and as fourth card the middle, ♢Q.

2) Given the cards in the initial screenshot, the target card must be ♢A, because that's the diamond which is one rank (the three middle cards are sorted in low, mid, high) above the ♢K.
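For readers who would rather check the scheme by computer than at a dinner party, Rules 1 to 3 and the ordering table can be sketched in a few lines of Python. The representation of a card as a `(suit, rank)` tuple and the names `encode`, `decode`, `card_key` and `PATTERNS` are my own choices for this sketch, not part of the original trick; the Ace is treated as the highest rank, wrapping around to 2, as in the examples above.

```python
RANKS = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K", "A"]
SUITS = ["♢", "♡", "♠", "♣"]  # ascending tie-break order, so ♣ > ♠ > ♡ > ♢

# Orderings of the sorted (low, mid, high) cards that signal 1, 2, ..., 6.
PATTERNS = [(0, 1, 2), (0, 2, 1), (1, 0, 2), (1, 2, 0), (2, 0, 1), (2, 1, 0)]


def card_key(card):
    """Sort key: rank first, suit as tie-breaker."""
    suit, rank = card
    return (RANKS.index(rank), SUITS.index(suit))


def encode(hand):
    """Given five distinct cards, return the four cards to reveal, in order."""
    for first in hand:
        for target in hand:
            if first == target or first[0] != target[0]:
                continue  # Rule 1: first card and target share a suit
            dist = (RANKS.index(target[1]) - RANKS.index(first[1])) % 13
            if 1 <= dist <= 6:  # Rule 3: target is at most 6 above first
                rest = sorted((c for c in hand if c not in (first, target)),
                              key=card_key)
                # Rule 2: order the other three cards to signal the distance
                return [first] + [rest[i] for i in PATTERNS[dist - 1]]
    raise ValueError("need five distinct cards")


def decode(revealed):
    """Recover the hidden card from the four revealed cards."""
    first, middle = revealed[0], revealed[1:]
    ranked = sorted(middle, key=card_key)
    pattern = tuple(ranked.index(card) for card in middle)
    dist = PATTERNS.index(pattern) + 1
    rank = RANKS[(RANKS.index(first[1]) + dist) % 13]
    return (first[0], rank)


hand = [("♣", "8"), ("♣", "K"), ("♠", "Q"), ("♡", "6"), ("♢", "Q")]
revealed = encode(hand)
print(revealed)
print(decode(revealed))
```

Run on the hand from the first concluding example, `encode` reveals ♣8, ♠Q, ♡6, ♢Q in that order, and `decode` recovers ♣K. When a hand offers several valid choices, this sketch simply takes the first one it finds.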

Addendum:

A similar, yet distinct, solution is the following: Ignoring Rule 1 and the suits gives us 4*3*2 = 24 ways to reveal four well-ordered cards. We reveal 4 of the 52 cards, leaving us with 48 possible targets. Since two cards can be at most 48/2 = 24 ranks apart (use a larger version of the circle and calculate modulo 48) we can agree that the target card is 1, 2, ..., or 24 ranks higher than the highest revealed card.


Monday, July 7, 2014

A card-deck challenge

Hello Mathemathinking readers,

today's post is a bit special in two ways: First, I'm not Cory. My name is Bernhard, and I was Cory's Math office mate at UBC in Vancouver.

Several times Cory and I chatted about writing a blog together, and I'm happy to finally contribute to his page. In fact, it will be a double header: I want to challenge you to a fun quiz, and will give a week's time to find a solution, before I reveal my answer. Email full solutions to me, or discuss the problem in the comments below (hints are welcome, but please don't post full solutions in the comments). Ready? Here we go:

You need a partner, and a standard 52-card deck with four suits and 13 cards per suit (no jokers). Five random cards are drawn from the deck. While you see none of the cards, your partner is allowed to view all five. Your partner then decides which four of those cards she wants to reveal to you and in which order. Is there an ordering scheme that you and your partner can agree on beforehand that allows you to determine the fifth (hidden) card from the choice and order of the four cards that your partner shows?

If you think that's always possible, explain your scheme.

Otherwise, explain why such a general algorithm cannot exist.

To clarify: Since your partner can choose which four cards to show, she also chooses the card you have to guess. Also, all the information you get is the rank and the suit of the four cards, and the order in which they are revealed. There is no additional information in the way the cards are revealed (e.g., your partner cannot flip some of the cards to signal additional information).

Good luck, I look forward to your answers!


Monday, June 9, 2014

Voronoi cookies and the Post Office Problem

In an attempt to bake cookies, I failed to place the balls of dough far enough apart and, instead, a cookie cake formed. The result resembles a very useful diagram in mathematics called a Voronoi tessellation.

What I was excited about when I pulled this out of the oven was the boundary that formed between the regions intended to be separate cookies. The regions enclosed by these boundaries (the "intended cookies") are called Voronoi cells. I drew the lines that approximately partition the area of the pan into Voronoi cells. You can also imagine the centers of the balls of cookie dough that I placed in the pan before I put it in the oven, marked as red points that I will call sites $p_k$.

In a Voronoi diagram, all points on these lines are equidistant from the two sites of the adjoining Voronoi cells. All points inside the region enclosed by these lines are thus closer to the site of that Voronoi cell than to any other site.

This leads to the mathematical definition of a Voronoi cell or region $R_k$ of a site $p_k$ (the cookie centers) as all points $x$ that are closer to site $p_k$ than any other site:

$R_k := \{x : d(x,p_k) < d(x,p_j) \text{ for all } j \neq k\}$.

Here, $d(x,p_k)$ is notation for the distance between point $x$ and site $p_k$.

How are Voronoi cells useful? Consider a classic optimization problem called the Post Office Problem. A new city has just built 10 post offices. With the aim of reducing the cost of delivering mail, how can we optimally assign each residence a post office from which to receive mail?

Assuming that the density of residences is spatially uniform, we seek to assign each house to the nearest post office*. Thinking of the locations of the post offices as sites $p_k$, the solution to the optimization problem is to assign all houses in the Voronoi cell $R_k$ to post office $k$. This is because each point in the Voronoi cell $R_k$ is by definition closer to post office $k$ (located at $p_k$) than to any other post office.
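As a minimal sketch of this assignment rule, the snippet below places each house in a Voronoi cell by finding its nearest site. The coordinates and the helper name `nearest_site` are made up for illustration, and straight-line (Euclidean) distance is assumed.

```python
import math

def nearest_site(x, sites):
    """Return the index k of the site p_k closest to point x.

    This is the Voronoi cell R_k that contains x. A point exactly on a
    boundary is equidistant from two sites; here the tie goes to the
    lower index, which is an arbitrary choice.
    """
    return min(range(len(sites)), key=lambda k: math.dist(x, sites[k]))

# Hypothetical post office locations (the sites p_k), in arbitrary units.
post_offices = [(0.0, 0.0), (4.0, 0.0), (2.0, 3.0)]

# Hypothetical houses to be assigned to a post office.
houses = [(0.5, 0.5), (3.5, 0.2), (2.1, 2.0), (1.0, 2.5)]

# Map each house to the index of its nearest post office.
assignment = {house: nearest_site(house, post_offices) for house in houses}

for house, k in assignment.items():
    print(f"house at {house} -> post office {k}")
```

With more than a handful of sites, one would likely use a spatial index rather than checking every site, but the brute-force version mirrors the definition of $R_k$ most directly.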

Another amusing story is that of the physician John Snow, who in London in 1854 used a Voronoi diagram to suggest that cholera was being spread by drinking water rather than through the air, as was thought at the time. He plotted the cholera cases on a map of London and imagined the water pumps as sites $p_k$, drawing the Voronoi diagram of these sites. He noticed that the victims were concentrated within the Voronoi cell of a certain [infected] water pump. The assumption here is that people get their water primarily from the closest water pump. This suggested that cholera is water-borne and identified the problematic water source [1].

In my research group at Berkeley, we use Voronoi diagrams to model the pore space of nanoporous materials [2]. This geometrical representation of a material helps us search for materials that are suitable for applications such as storing natural gas for vehicular fuel tanks and separating carbon dioxide from the flue gas of coal-fired power plants.

In machine learning, the branch of artificial intelligence that enables self-driving cars and the recommendation algorithms of Netflix, one supervised classification algorithm, called nearest-neighbor classification, is built on the Voronoi diagram. Let's say Netflix has a vector of data about you, including your age, gender, and which movies you've watched and how you've rated them. This is a representation of you in a higher-dimensional space. One strategy for recommending your next movie might be to simply find the person in their database who is most like you, see what movies that person likes, and recommend these movies to you. This corresponds to finding the site $p_k$ (representing the person most like you) in high-dimensional space such that you lie in the Voronoi cell $R_k$.
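Here is a toy sketch of that nearest-neighbor idea. The user names, the three features, and the movie titles are all invented for illustration; a real recommender would at least rescale the features so that no single one (here, age) dominates the distance.

```python
import math

# Hypothetical database: name -> (feature vector, a movie that person liked).
# Features (assumed): age, average rating of comedies, average rating of dramas.
users = {
    "alice": ((25, 4.5, 2.0), "Comedy A"),
    "bob": ((60, 2.0, 4.5), "Drama B"),
    "carol": ((30, 1.5, 5.0), "Drama C"),
}

def most_similar_user(me, users):
    """Return the name of the user whose feature vector is closest to `me`.

    Equivalently: the site p_k whose Voronoi cell R_k contains `me`.
    """
    return min(users, key=lambda name: math.dist(me, users[name][0]))

def recommend(me, users):
    """Recommend the movie liked by the most similar user (1-nearest neighbor)."""
    return users[most_similar_user(me, users)][1]

me = (27, 4.0, 2.5)
print(most_similar_user(me, users), recommend(me, users))
```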

My colleague at Berkeley, upon reading this post, mentioned that she uses Voronoi tessellations to characterize how polymers interact with water, aiding in the identification of polymers for drug delivery and lubricants.

Finally, why did the cookie cake form a Voronoi diagram? I think that one can prove that baking cookies will form a Voronoi network if you assume that each cookie expands from its center at a constant, uniform rate and stops expanding when it meets the surface of another cookie. The points where the boundaries of the intended cookies meet will form the lines in the Voronoi diagram. My cookie cake is not a perfect Voronoi diagram because a) each cookie was not initially the same shape and b) the boundary of the pan does not allow for a radially symmetric cookie expansion.

* The problem is realistically more complicated because mailmen must take roads, and these are not straight from the post office to the residences. Still, this may be a reasonable approximation.

[1] http://plus.maths.org/content/uncovering-cause-cholera

[2] Marielle Pinheiro, Richard L. Martin, Chris H. Rycroft, Andrew Jones, Enrique Iglesia, Maciej Haranczyk, Characterization and comparison of pore landscapes in crystalline porous materials, Journal of Molecular Graphics and Modelling, Volume 44, July 2013, Pages 208-219.

Thursday, May 22, 2014

Feel like your lovers had more lovers than you?

Most people have had fewer lovers than their lovers have, on average.

The fact that sex is symmetric makes this seem paradoxical. If person X slept with person Y, of course person Y slept with person X. So, how can we possibly expect that a given person has had fewer lovers than their lovers?

The answer is that you are more likely to have sex with someone who has had sex with a lot of people. Let's say person X has slept with 25 people, whereas person Y has slept with one. Then, [if you choose your partners at random] you are 25 times as likely to have slept with person X as with person Y.

This is a form of sampling bias. As individuals, we are more likely to sample [sleep with] those that are more sexually active than others in the population, and this causes us to observe an average higher than the true network average.

In the extreme case, if person Z is a virgin, he or she cannot possibly count towards anyone's sample to determine the average number of lovers!

This idea of sampling bias is behind the reason "why people experience airplanes, restaurants, parks and beaches to be more crowded than the averages would suggest. When they’re empty, nobody’s there to notice." [Steven Strogatz, Ref. 1]

The paradoxical phenomenon that most people have had fewer lovers than their lovers have also holds for our friendships. Your friends likely have more friends than you -- this is "the friendship paradox" discovered by Scott Feld [2]. A study analyzing the social network of friends on Facebook (Ref. 3) showed that 93% of people on Facebook have fewer friends than the average of their friends' number of friends (Ref. 1)!

Similarly, if you are in academia, your coauthors likely have more publications and more citations than you do (Ref. 4); you are more likely to have published with someone who has many publications than someone who has only one.

Not only is the friendship paradox interesting, it is useful. During the H1N1 outbreak in 2009, researchers monitored the health of two disparate sets of students at Harvard University (Ref. 5). The first group consisted of randomly selected individuals. The second group consisted of friends of the individuals in the first group. They found that the flu epidemic progressed two weeks earlier in the second group than in the first! This is because, by the friendship paradox, those in the second group are likely to have more friends than those in the first group and hence to be in contact with more individuals and get the flu earlier. This is a clever sampling method to sense a disease outbreak earlier than by observing randomly selected individuals: instead, observe the friends of randomly selected individuals (Ref. 5).

----------

Finally, what you've all been waiting for. A proof of the friendship paradox. A network of friends can be represented as an undirected graph $G(V,E)$. This graph $G$ consists of a set $V$ of vertices, representing people, and a set $E$ of edges, representing friendships. An edge connects two vertices.

The only notation we need is:

$d(v)$: the degree of vertex $v$. (The number of friends that the person represented by the vertex $v$ has)

$|V|$: the total number of vertices (The number of people in our network)

$|E|$: the total number of edges in our network (The number of friendships).

What is the expected number of friends a person has in our network? This corresponds to the expected value of $d(v)$ of a randomly chosen person in the network.

$E(d)=\sum_{v \in V} \frac{1}{|V|} d(v)$.

So we sum over all people and count their friends, giving each count a weight $\frac{1}{|V|}$ since this is the probability that the given person was the randomly chosen person (the same for everyone in the network).

This can be simplified by the handshaking lemma, which notes that if we loop over all people in the network and add up their count of friends to a total, we will double-count the number of edges in the graph. Thus

$E(d)= \frac{2 |E|}{|V|}$.

Now, I will write the expected value of some characteristic $x$ of a neighbor of a randomly chosen person in the network as $\langle x \rangle_n$. This is computed as:

$\langle x \rangle_n = \dfrac{ \sum_{v \in V} d(v) x(v) } { \sum_{v \in V} d(v) }$.

The degree $d(v)$ appears in the top sum because vertex $v$ appears as a neighbor $d(v)$ times. Mathematically, this weight is where the idea of a sampling bias comes into play. The denominator is a normalization. Now it becomes clear that the expected number of friends of a neighbor is:

$\langle d \rangle_n = \dfrac{ \sum_{v \in V} d(v)^2 } { \sum_{v \in V} d(v) }$.

Using the relation between variance of a random variable $X$, $E([X - E(X)]^2)$, the expected value of $X$, and the expected value of $X^2$:

$E([X - E(X)]^2) = E(X^2) - E(X)^2$,

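Dividing the numerator and denominator of $\langle d \rangle_n$ by $|V|$ shows that $\langle d \rangle_n = E(d^2)/E(d)$. Substituting $E(d^2) = E(d)^2 + E([d - E(d)]^2)$ from the variance relation, we can relate $\langle d \rangle_n$ to $E(d)$: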

$\langle d \rangle_n = E(d) + E([d-E(d)]^2) / E(d)$

This ends the proof by noting the following. The expected number of friends of a friend, $\langle d \rangle_n$, is larger than the expected number of friends of a randomly chosen individual, $E(d)$, by an amount $E([d-E(d)]^2) / E(d)$, which is positive unless everyone in the network has exactly the same number of friends (in which case the variance is zero). Thus, our friends likely have more friends than we do!
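To make the result concrete, here is a small numerical check on a made-up network of five people. The edge list and variable names are purely illustrative, and the sketch assumes everyone has at least one friend (so every person appears in some edge).

```python
import math
from statistics import pvariance

# Hypothetical friendship network as an undirected edge list.
edges = [("a", "b"), ("a", "c"), ("a", "d"), ("b", "c"), ("d", "e")]

# d(v): count how many edges touch each vertex.
degree = {}
for u, v in edges:
    degree[u] = degree.get(u, 0) + 1
    degree[v] = degree.get(v, 0) + 1

degrees = list(degree.values())

# Handshaking lemma: summing all degrees double-counts the edges.
assert sum(degrees) == 2 * len(edges)

# E(d): mean number of friends of a randomly chosen person.
mean_degree = sum(degrees) / len(degrees)

# <d>_n: mean number of friends of a neighbor, where each vertex is
# weighted by its degree because it appears as a neighbor d(v) times.
neighbor_mean_degree = sum(d * d for d in degrees) / sum(degrees)

# Population variance of the degree, E([d - E(d)]^2).
variance = pvariance(degrees)

print("E(d)    =", mean_degree)
print("<d>_n   =", neighbor_mean_degree)
print("E(d) + Var(d)/E(d) =", mean_degree + variance / mean_degree)

# The identity from the proof holds up to floating-point rounding.
assert math.isclose(neighbor_mean_degree, mean_degree + variance / mean_degree)
```

In this example the degrees are 3, 2, 2, 2, and 1, so the average person has 2 friends while the average friend has 2.2.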

References

[1] http://opinionator.blogs.nytimes.com/2012/09/17/friends-you-can-count-on/?_php=true&_type=blogs&_r=0

[2] Feld, S. L. (1991). Why Your Friends Have More Friends than You Do. AJS, 96(6), 1464-77.

[3] Ugander, J., Karrer, B., Backstrom, L., & Marlow, C. (2011). The anatomy of the facebook social graph. arXiv preprint arXiv:1111.4503.

[4] Eom, Y. H., & Jo, H. H. (2014). Generalized friendship paradox in complex networks: The case of scientific collaboration. Scientific reports, 4.

[5] Christakis, N. A., & Fowler, J. H. (2010). Social network sensors for early detection of contagious outbreaks. PloS one, 5(9), e12948.

[6] http://en.wikipedia.org/wiki/Friendship_paradox

[7] http://www.psychologytoday.com/blog/the-scientific-fundamentalist/200911/why-your-friends-have-more-friends-you-do
